Conviction Cascades: Why people adopt beliefs without evidence or persuasion...(1.5.26)

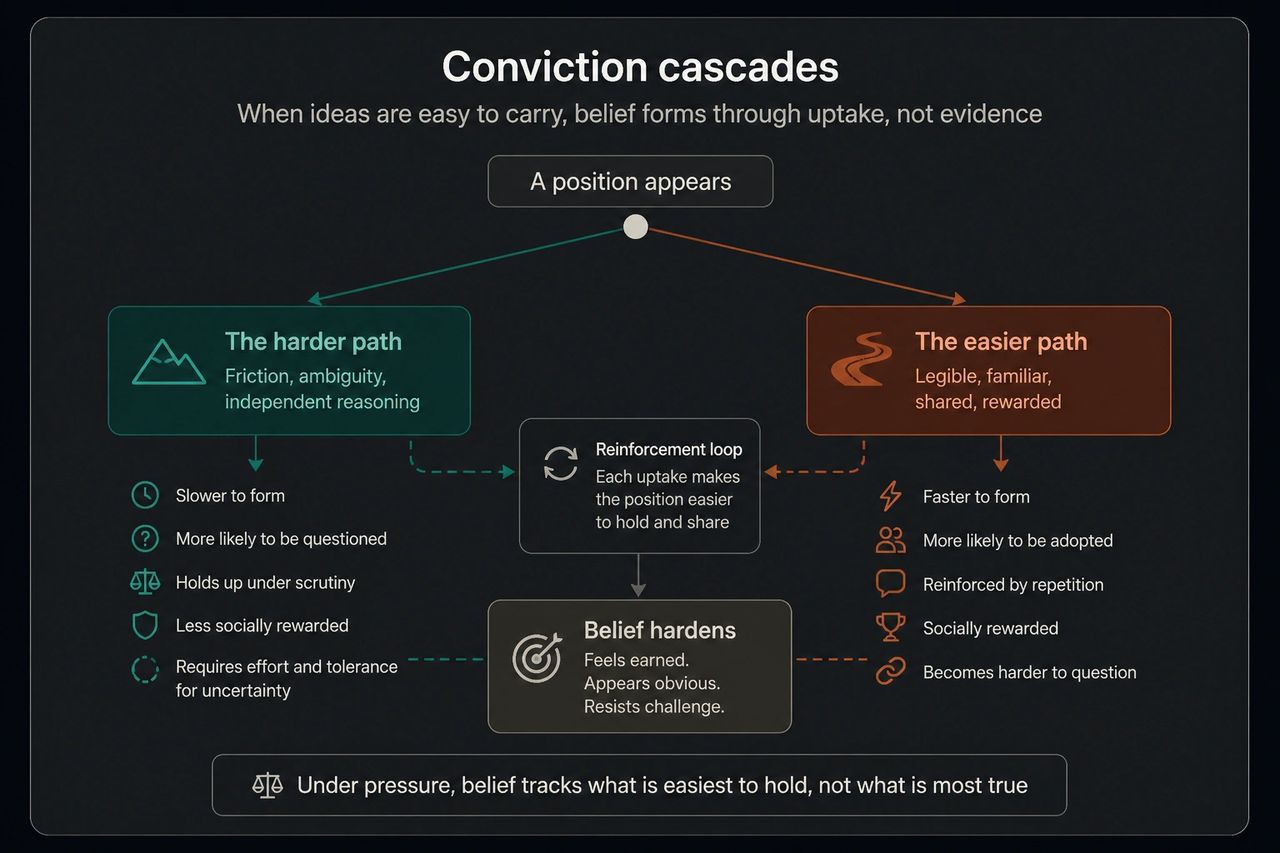

I recently came across a Substack piece called "Why Did Everyone Go Crazy All At Once?" It was asking the same kinds of questions I have spent the best part of two years trying to answer: how do ideas that most people privately find implausible come to dominate the professional classes, seemingly overnight, and with so little resistance?

Its author's answer involves a concept called preference falsification, an idea from the economist Timur Kuran that has had a moment lately, particularly since Marc Andreessen started tweeting about it after the 2024 US election. The basic claim is elegant: people hide their real views under social pressure, and when the pressure lifts, the truth comes flooding out. A preference cascade. I think that is partly right, but it is incomplete: it misses important parts of the mechanism.

Kuran's framework goes like this. People have private preferences that differ from their public statements. They lie, essentially, to avoid social costs. They know they are lying. The gap between private belief and public statement widens over time, especially under regimes that punish dissent. Then eventually something cracks: a few people speak up, others follow, and the whole edifice collapses because it turns out nobody believed it in the first place…

This is a useful model. It explains the fall of the Berlin Wall. It explains why people who were adding pronouns to their email signatures in 2020/21 were furtively removing them by 2024. And it explains some of what happened in the therapy profession too. I know colleagues who have sat silently through training and attended workshops they privately found absurd. Some of them have told me as much. That is preference falsification in its textbook form.

But stopping there treats the whole phenomenon as a story about lying, about people who knew the truth all along and simply lacked the courage to say it. And while that is of course true for some people, it does not describe what I have actually observed in most cases. Most of the people I have watched adopt these positions did not look like they were lying. They looked like they believed it.

There are, broadly, three accounts of how a society ends up marching in lockstep behind ideas that do not survive five minutes of honest scrutiny, and it’s important to delineate them as they require completely different responses.

The first is brainwashing: the ruling class saturates the culture with propaganda and the masses passively absorb it. This is condescending. It assumes people are empty vessels. It cannot explain why the most supposedly brainwashed people are often the most educated. And it conveniently flatters whoever is making the accusation, since they are apparently the only ones who managed not to be brainwashed.

The second is preference falsification: people know the ideas are nonsense but go along with them to avoid punishment. This is better. It respects people's intelligence and explains the speed of collapse when conditions change. But it assumes that the person maintaining the false position retains, somewhere inside, an accurate private map of reality.

The third is what I have called a conviction cascade. A conviction cascade is what happens when people come to hold a position they were never actually persuaded of in the first place. The position arrived pre-formed, was easy to “pick up and share” as it fit the social and professional environment they were operating in, and over time became indistinguishable from something they had worked out for themselves. The person is not lying. They believe it. But the belief did not form through encountering evidence, weighing it, and arriving at a conclusion. It formed instead because it was the easiest thing to believe given the conditions they were in.

Under pressure, belief maps most cleanly on to what is easiest to carry and share, not what is most true…

The mechanism starts with what I call legibility (simply, how easily others can read one’s opinion). In any professional environment where the topic is sensitive, time is limited, and people are watching their words, certain kinds of contribution are easier to make than others. A position that is already circulating, uses familiar language, and signals moral seriousness without requiring the group to slow down will be easy to read. It will be picked up, echoed, built upon. A position that requires careful framing, asks people to hold ambiguity, calls on data that seems to challenge the prevailing view, or introduces distinctions nobody has time for is harder to carry. Nobody will throw you out for raising it, but it costs more. It creates friction at the personal level. Most people, most of the time, will reach for the position that fits easily. Not because they are cowards. Because reaching for the easier position feels like professionalism, like reading the room, like knowing how to work with people. And it is…

Nonetheless, it’s how we come to accept as “truth” ideas that don’t map that well on to reality. Each time someone reaches for the legible position, it becomes a little more familiar and a little more settled. A little more like what we all think. Each uptake lowers the effort required for the next. The position compounds. Over time, the environment itself begins to do the work. People anticipate what will land easily before they speak and start to edit contributions before they are fully formed. They haven’t been told to do this but have learned through many small interactions what kinds of thinking move smoothly through the system. So, it is not censorship, and it is not quite self-censorship either... It is an ambient environmental change leading, under pressure, to a narrowing of what can be thought without effort. All this happens upstream of speech itself.

Now here is the part that separates this from preference falsification. In Kuran's model, the person knows they are faking it. They hold a private preference that differs from their public one, and living with that gap is painful, so pressure builds over time. When the social pressure lifts, the truth comes pouring out. In a conviction cascade, this gap (believing one thing but saying another) closes. The person does not experience themselves as going along with something they disagree with. They experience themselves as having arrived at a reasonable position through reasonable means. After all, they have thought about it. They have discussed it with colleagues. Everyone they respect holds a similar view. Sensible voices in media confirm it. The conclusion therefore feels earned. But trace it back and the position was adopted because it was available, it fit, it reduced friction. And, crucially, in an urgent moral environment there was not the time to think it through. Each time it was encountered without challenge, it settled a little further. Each time it was used as a reference point, it acquired a little more weight.

I noticed this recently in an online supervision group I was part of. A therapist brought a formulation and I could hear the framework behind it, could trace it to the training culture that had produced it. There are courses in this country that explicitly centre anti-oppressive practice as, in one programme's words, 'not something added on as difference and diversity, but something that shapes how we teach and supervise.' A therapist who has trained in that environment does not experience their formulation as borrowed. It feels like clinical thinking, like something they arrived at through their own reasoning. The conviction is real. But the pathway to it was structural.

I should say that this is not a comfortable thing to notice about colleagues and the therapeutic space in which I operate. It would be much easier to believe they all arrived at their positions through careful thought. And I’m sure some of them did, even if people like me disagree strongly with the likely unintended consequences for therapy itself, and especially for clients. But watching the mechanism operate week after week, you start to develop an eye for which conclusions were reached through clear thinking, and which ones have been ported in because they are easy and fashionable. The difference is not always flattering to the people involved. Including, occasionally, to me.

The practical difference from preference falsification is enormous. If the problem is preference falsification, the solution is relatively straightforward: reduce the social cost of dissent, create the conditions for honest expression, and wait for the truth to emerge. And in some corners of public life there are signs of this happening. People are saying things now that would have been unsayable two years ago, and the speed with which certain positions have been abandoned suggests they were never deeply held in the first place. But at what cost in the meantime?

But something else is going on. Not everyone who drops a position was hiding behind it. Some people who enthusiastically promoted these ideas genuinely believed them. And what we are seeing now is not a reversal but a polarisation. Two competing systems of conviction, each one hardening inside its own environment, each one produced by the same mechanism operating in different rooms. The people inside each system are not faking it. They believe what they believe.

But trace the pathway and the pattern is the same on both sides: the position that was available, that reduced friction, that was legible in the environment, became over time the position that was held. The content differs but it’s the same mechanism.

Clearly that leaves a third category: those who do seem partially resistant to the pull of ‘closed systems’. Not immune, because nobody is immune, but some people seem to allow a little more friction and adjust less readily. I do not think this is about intelligence or courage, though both help. It is more often a structural accident. People who operate across multiple environments that run different cascades simultaneously are forced to notice the mechanism, because what is legible in one room is not legible in another. A therapist who also reads economics. A corporate professional who also makes art. Someone who lives in one culture and works in another. The incompatibility between cascades makes the machinery visible in a way that operating inside a single cascade never does. Resistance to conviction cascades is therefore not a character trait but a byproduct of inhabiting contradictory worlds, which is uncomfortable but useful, and which most institutional cultures are actively designed to prevent.

Let me be careful about what I am claiming. I am not saying people are stupid. The mechanism operates most effectively among conscientious, thoughtful, socially aware people. That is the whole point. I am not saying that all positions adopted through cascades are wrong. A position can be true and still arrive through a mechanism that has nothing to do with its truth. The problem is not the content. It is the process. A conclusion reached through ease of uptake rather than examination will be held with the wrong kind of confidence and abandoned with the wrong kind of speed. And I am not saying conviction cascades are avoidable. They are structural features of social coordination under pressure. I am inside them. So are you. The most any of us can do is recognise the mechanism, develop some feel for when our own convictions are being shaped by the channel rather than by the territory, and cultivate enough tolerance for friction that we do not always reach for whatever is easiest to carry.

The antidote, if there is one, is to allow more friction. More willingness to slow down when things feel settled. More tolerance for the person in the room who is saying something that does not quite fit. More suspicion of conclusions that arrived fully formed and never seemed to need defending, and whose proponents often struggle to defend them without resorting to slogans, pathologising dissent, or cliché. And, above all, a basic humility about the origins of our own certainties. Most of us believe what we believe not because we examined the alternatives and found them wanting, but because what we believe was available, and easy, and the people around us believed it too.

The cascade is always running. The only question is whether you are aware of it, or whether you are inside it and do not know.
